The field of image generation moves quickly. Though the diffusion models used by popular tools like Midjourney and Stable Diffusion may seem like the best we’ve got, the next thing is always coming — and OpenAI might have hit on it with “consistency models,” which can already do simple tasks an order of magnitude faster than the likes of DALL-E.

The paper was put online as a preprint last month, and was not accompanied by the understated fanfare OpenAI reserves for its major releases. That’s no surprise: this is definitely just a research paper, and it’s very technical. But the results of this early and experimental technique are interesting enough to note.

Consistency models aren’t particularly easy to explain, but make more sense in contrast to diffusion models.

In diffusion, a model learns how to gradually subtract noise from a starting image made entirely of noise, moving it step by step closer to an image that matches the target prompt. This approach has enabled today’s most impressive AI imagery, but fundamentally it relies on performing anywhere from ten to thousands of steps to get good results. That means it’s expensive to operate and also slow enough that real-time applications are impractical.
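To make the cost concrete, here is a toy sketch of that step-by-step loop. It is not how any production system is implemented: the "images" are single numbers drawn from a Gaussian, the denoiser is a closed-form formula standing in for a trained network, and every name and constant (`MU`, `S`, `T_MAX`, `T_MIN`, `ideal_denoiser`, `sample_iteratively`) is an assumption made for illustration. The point is only that each step costs one model call, and that fewer steps means a worse result.

```python
import math

# Toy stand-in for a dataset: "images" are single numbers drawn from N(MU, S^2).
MU, S = 2.0, 0.5
T_MAX, T_MIN = 80.0, 0.002  # highest and lowest noise levels (illustrative values)


def ideal_denoiser(x, t):
    """Best guess of the clean value behind noisy x at noise level t.

    A real diffusion model learns this with a neural network; for Gaussian
    toy data it has a closed form, which is what keeps this sketch runnable.
    """
    return MU + (S**2 / (S**2 + t**2)) * (x - MU)


def sample_iteratively(x, steps):
    """Remove noise a little at a time, using one denoiser call per step."""
    # Noise levels spaced geometrically from T_MAX down to T_MIN.
    levels = [T_MAX * (T_MIN / T_MAX) ** (i / steps) for i in range(steps + 1)]
    calls = 0
    for t_cur, t_next in zip(levels, levels[1:]):
        slope = (x - ideal_denoiser(x, t_cur)) / t_cur  # dx/dt at this noise level
        calls += 1
        x += (t_next - t_cur) * slope  # Euler step down to the next noise level
    return x, calls


def exact_endpoint(x):
    """What an infinitely fine walk from T_MAX to T_MIN would return (toy data only)."""
    scale = math.sqrt(S**2 + T_MIN**2) / math.sqrt(S**2 + T_MAX**2)
    return MU + (x - MU) * scale


start = MU + math.sqrt(S**2 + T_MAX**2)  # a fixed "pure noise" starting point
for steps in (10, 100, 1000):
    x, calls = sample_iteratively(start, steps)
    error = abs(x - exact_endpoint(start))
    print(f"{steps:>4} steps, {calls:>4} model calls, error {error:.4f}")
```

In this toy setup the error shrinks as the step count grows, and the number of model calls grows right along with it. That is the trade-off in miniature.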

The goal with consistency models was to make something that got decent results in a single computation step, or at most two. To do this, the model is trained, like a diffusion model, to observe the image destruction process, but learns to take an image at any level of obscuration (i.e. with a little information missing or a lot) and generate a complete source image in just one step.
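A minimal sketch of the one-step idea, under heavy assumptions: the "images" are single numbers drawn from a toy Gaussian, and `consistency_fn` is a closed-form formula that only exists because the data is that simple. In the actual research the mapping is a trained neural network. The names and constants (`MU`, `S`, `T_MAX`, `T_MIN`, `point_on_path`) are hypothetical. What it shows is the defining property: hand the function the same underlying sample at any noise level, and it returns the same clean result in one call.

```python
import math

# Toy stand-in for a dataset: "images" are single numbers drawn from N(MU, S^2).
MU, S = 2.0, 0.5
T_MAX, T_MIN = 80.0, 0.002  # highest and lowest noise levels (illustrative values)


def consistency_fn(x, t):
    """Jump from noisy x at noise level t straight to the clean end of its path.

    Closed form for Gaussian toy data only; a real consistency model is a
    trained network that approximates this kind of mapping.
    """
    scale = math.sqrt(S**2 + T_MIN**2) / math.sqrt(S**2 + t**2)
    return MU + (x - MU) * scale


def point_on_path(clean, t):
    """Where the denoising path that ends at `clean` sits at noise level t."""
    scale = math.sqrt(S**2 + t**2) / math.sqrt(S**2 + T_MIN**2)
    return MU + (clean - MU) * scale


clean = 2.3
# The same sample, seen lightly obscured, moderately obscured, and as near-pure noise.
outputs = [consistency_fn(point_on_path(clean, t), t) for t in (0.1, 1.0, T_MAX)]
print(outputs)
```

Each entry in `outputs` comes from a single function call, however noisy the input was.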

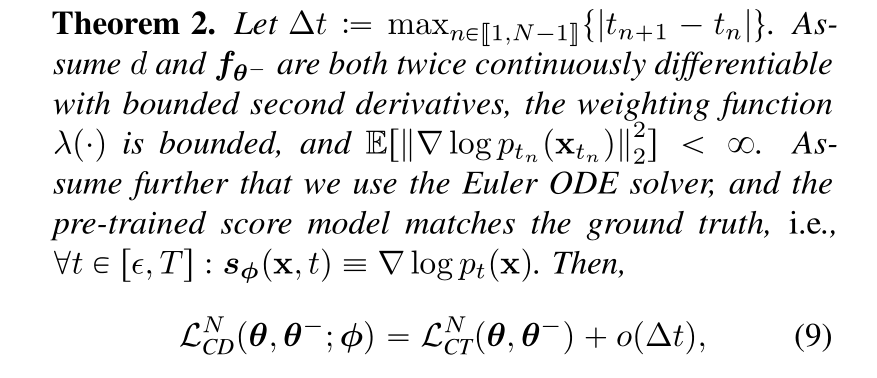

But I hasten to add that this is only the most hand-wavy description of what’s happening. It’s this kind of paper:

A representative excerpt from the consistency paper.

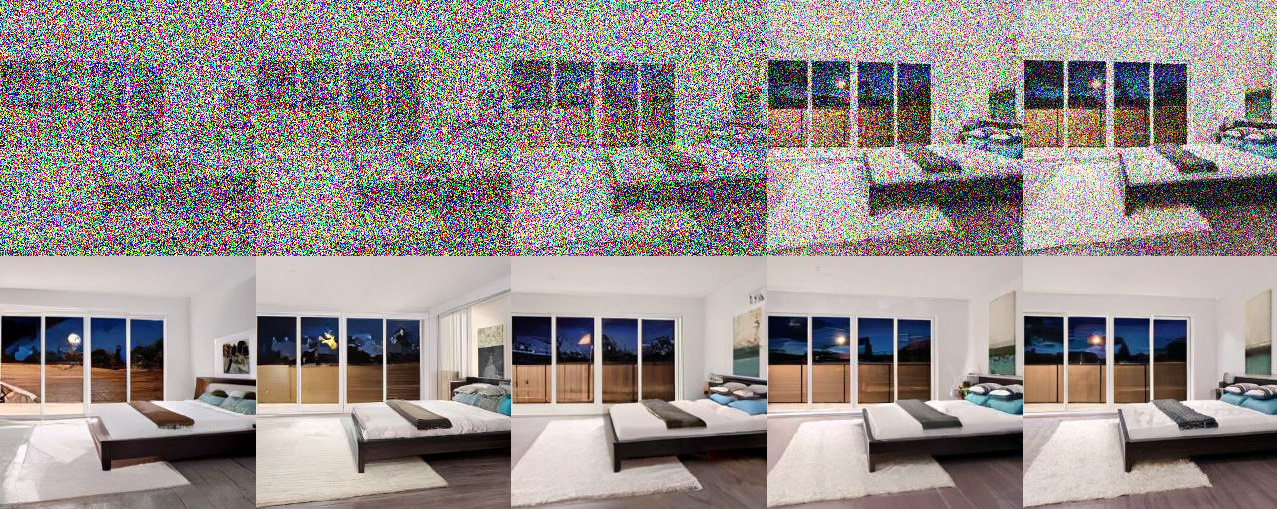

The resulting imagery is not mind-blowing — many of the images can hardly even be called good. But what matters is that they were generated in a single step rather than a hundred or a thousand. Furthermore, the consistency model generalizes to diverse tasks like colorizing, upscaling, sketch interpretation, infilling, and so on, also with a single step (though frequently improved by a second).

Whether the image is mostly noise or mostly data, consistency models go straight to a final result.

This matters, first, because the pattern in machine learning research is generally that someone establishes a technique, someone else finds a way to make it work better, then others tune it over time while adding computation to produce drastically better results than you started with. That’s more or less how we ended up with both modern diffusion models and ChatGPT. This is a self-limiting process because practically you can only dedicate so much computation to a given task.

What happens next, though, is that someone identifies a new technique that can do what the previous model did: far worse at first, but also far more efficiently. Consistency models demonstrate this, though it is still early enough that they can’t be directly compared to diffusion models.

But it matters at another level because it indicates that OpenAI, easily the most influential AI research outfit in the world right now, is actively looking past diffusion at next-generation use cases.

Yes, if you want to do 1500 iterations over a minute or two using a cluster of GPUs, you can get stunning results from diffusion models. But what if you want to run an image generator on someone’s phone without draining their battery, or provide ultra-quick results in, say, a live chat interface? Diffusion is simply the wrong tool for the job, and OpenAI’s researchers are actively searching for the right one. Among them is Ilya Sutskever, a well-known name in the field, which is not to downplay the contributions of the other authors, Yang Song, Prafulla Dhariwal, and Mark Chen.

Whether consistency models are the next big step for OpenAI or just another arrow in its quiver — the future is almost certainly both multimodal and multi-model — will depend on how the research plays out. I’ve asked for more details and will update this post if I hear back from the researchers.